WTF is Your Metric Doing to Your Customers?

May 10, 2019

Originally posted on Medium via UX Collective.

In this series…

- Part 1: WTF is a Metric? — introduction to the basics of metrics, what it is, and why it matters.

- Part 2: 4 Steps to Defining GREAT Metrics for ANY Product — how to develop a metric from scratch.

- Part 3: WTF is Your Metric Doing to Your Customers? — how to avoid the unintended consequences of the “one metric that matters.”

Let’s start with a few news stories over the past years…

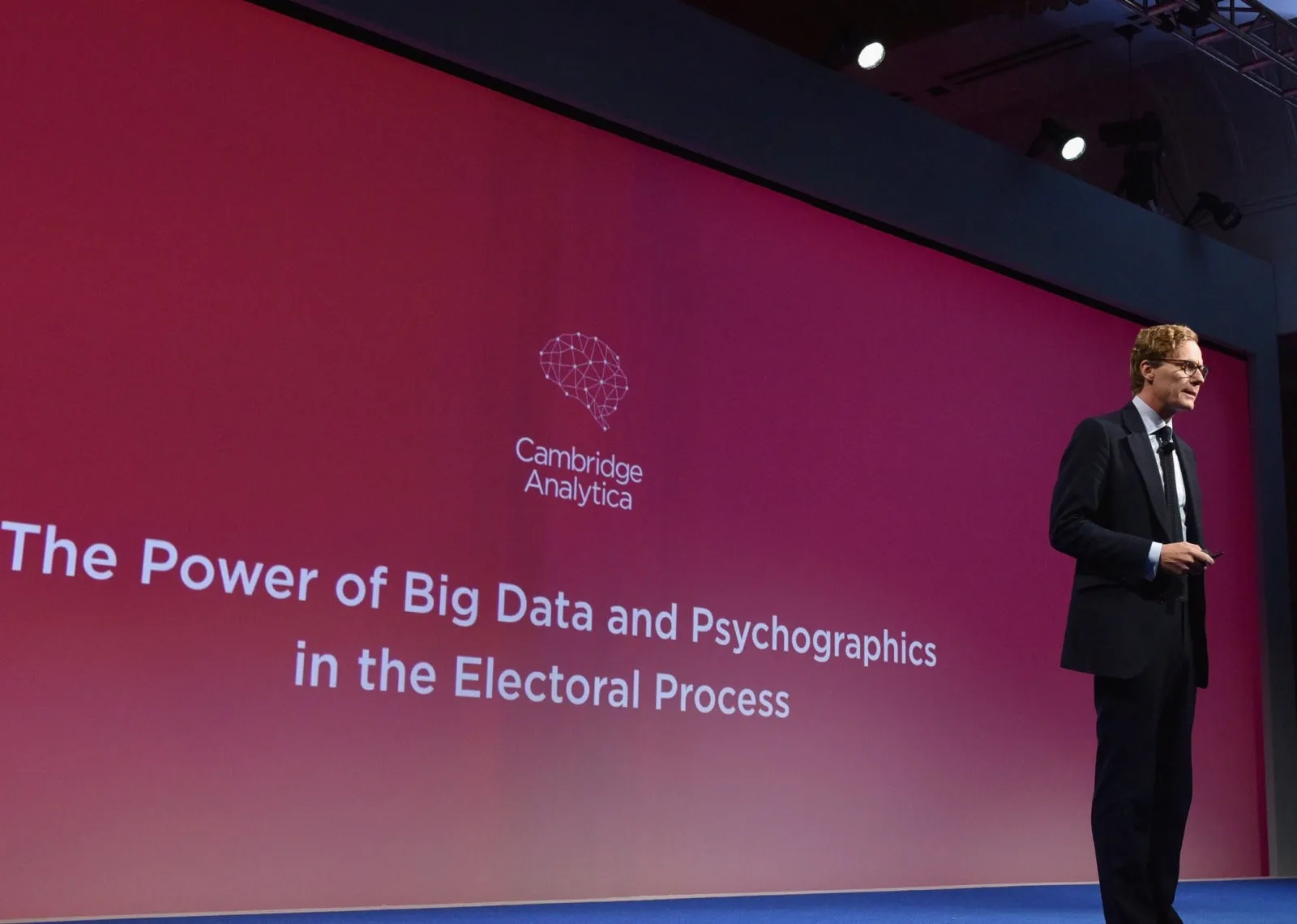

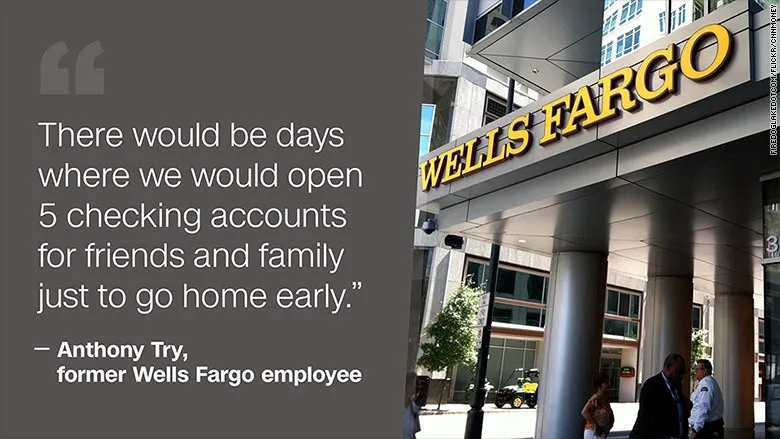

In 2016, Wells Fargo was fined $185 Million for opening more than 2 Million fake accounts. Some 5,300 employees were fired for “Improper Sales Practices” driven by Wells Fargo’s aggressive sales targets.

United passenger David Dao Duy Anh. Photo from YouTube. See video from Business Insider via YouTube.

In 2017, a Vietnamese-American pulmonologist was violently dragged off United Airlines Flight 3411 when United needed to “re-accommodate” customers so it could fly four of its own crew members to staff another flight. This shone a harsh light on the common airline practice of overbooking, wherein airlines sell more seats than are available to ensure a plane is full at take-off even if passengers cancel last-minute.

In 2018, it became known that Cambridge Analytica had been harvesting data from 87 Million Facebook users for political campaign purposes using 3rd-party Facebook apps. In the years leading up to this, Facebook’s laser-focus on revenue resulted in a 7-fold increase in their stock price over a 5-year period. This was due to ad revenue derived directly from user engagement (read: addiction and outrage) and supported by diverse content developed by 3rd parties who enjoy Facebook’s ability to grant them access to 2 Billion+ users. This is a scandal that gave us both an admission from Facebook’s CEO that “It was my mistake, and I’m sorry. I started Facebook, I run it, and I’m responsible for what happens here…” as well as the Zuckerberg Zmirk™:

“Senator, we run ads…” Photo from CCN. See video on YouTube.

What do all of these stories have in common?

Metrics: A Double-Edged Sword

There’s an old adage made famous by Bill Hewlett of Hewlett Packard:

You cannot manage what you cannot measure… and what gets measured gets done.

Similar to how social media has shown us people are predisposed to tracking how many “likes” they get on their latest post [1], Bill Hewlett understood the combination of instrumentation, measurement, and goal-setting is a powerful organizational driver and motivator.

Now that metrics have become integral to a company’s or product’s performance management, incredible focus is placed on the key performance indicators (KPIs) we set for ourselves and our teams. On a weekly, daily, hourly, or even minute-by-minute basis, teams obsessively check their analytics suites, dashboards, and leaderboards to see whether their actions have moved the needle on KPIs like daily new user growth, average minutes of engagement per user, or revenue per user.

However, this focus comes at an expense.

While not all teams that rally around a single business-focused metric will become myopic, it’s not difficult to imagine how easily this becomes the case. Managing against the “one metric that matters” runs the risk of tunnel vision while everything else suffers.

This is the double-edged sword of metrics that gives rise to their unintended consequences.

“But We Would Never Do That…!”

I’m sure the 5,300 employees at Wells Fargo thought so as well. It’s safe to say very few of them consider themselves “bad people” who wake up each morning with the explicit intent of harming their clients. However, faced with tough quotas and the prospect of earning bonuses, that’s exactly what they did.

Unfortunately, no product or organization is immune to unintended consequences [2]. If there is a measurement in a system, then there is incentive to game that system. For all the good a metric can do to bring focus to things that matter to you and your product, how certain can you be that the same metric isn’t creating incentives for anyone (customers, employees, partners, leaders) to cheat as an unintended side-effect?

How To Combat The Unintended Consequences Of Metrics

It is much easier to attempt creating a perfectly un-game-able system when you are able to control every aspect of the experience, such as within the virtual world of a mobile game [3]. However, most products and companies operate in the real world with real-world consequences, whether intended or unintended. Absent the ability to design perfect systems, we have to find as many levers as we can to ensure customers aren’t harmed for the sake of metrics that only measure business objectives.

In my years of managing products, I’ve noticed there are consistently a few places where we can introduce change to a complex system, namely a) the decisions that are driven by b) the processes that are defined by c) the people.

At each of these points of leverage, there are opportunities to implement safeguards against the unintended consequences of metrics:

- Design direct incentives to steer decisions that might otherwise end up hurting customers.

- Establish counter-metric guardrails that are embedded as part of the process.

- Empower a culture of accountability towards your customer among all people within your community.

More on each below…

1. Design Direct Incentives

Among the simplest methods to encourage good behavior or discourage bad behavior is to create rules around it. With this approach, you create either a high-cost punishment as a deterrent or a high-value incentive as a reward.

The benefit of direct incentives and disincentives is that they are relatively easy to construct and understand. You can apply rules and incentives on top of any system you’d like, regardless of whether the core of the system is operating efficiently or not. However, by this nature, direct incentives and disincentives can also be band-aids: one-off solutions layered on top of the core issues.

Both incentives and punishments can spur people into actions that are rewarding in different ways: functionally (“I get/lose something of value, like money”), socially (“I will look good/bad in front of others”), and emotionally (“I feel good/bad having done a good/bad thing”).

However, punishment-based disincentives require that you be proactive and deterministic, which can become inefficient. You have to anticipate and explicitly identify the negative behavior, then create rules and punishments to deter it through fear. If rules are poorly designed or not comprehensive enough, ill-intended parties may find a loophole.

Nudge: Improving Decisions about Health, Wealth, and Happiness by Richard H. Thaler and Cass R. Sunstein, 2008.

In recent years, the popularization of behavioral economics books such as Nudge has brought incentive systems to the forefront of product management and user experience design.

Unfortunately, a poorly designed incentive can also create more problems.

Case In Point… United Flight 3411

For airlines, the systemic pressure to be profitable drives this margin-conscious industry to nickel-and-dime their passengers as well as overbook their flights. Without fixing the fundamental issue of overbooking, a high-reward band-aid is to get passengers to give up their seat in exchange for flight vouchers.

In the case of the Flight 3411 Incident, all the passengers aboard were initially offered US$400 flight vouchers. When no one volunteered, US$800 flight vouchers were offered in their place. Only when no one took them up on the second offer did United select 4 passengers at random, subsequently dragging one of them off against their will.

The thing that no one wanted. Photo from imged.com.

From United’s perspective, this seemed like a fair way to offer a high-reward incentive. However, this highlights the shortfalls of this band-aid and the need for designing incentives thoughtfully:

- When the US$400 voucher was offered, it only took a few moments of silence for the plane full of passengers to second-guess the value. After all, who wants to be the sucker that singles themselves out to raise their hand and accept the lowest bid?

- By the time the US$800 voucher was offered, everyone was already in no-sucker mode: “I better wait to see if they will offer even more.”

This approach was only functionally rewarding, not at all socially or emotionally satisfactory.

I have observed other airlines practice a much better voucher process. Once on a Southwest flight, they announced to passengers “Raise your hand if you’d like to give up your seat on this plane to receive a US$2,000 voucher!” Inevitably, more than 4 people raised their hands, by design. The airline then gathered the small group and asked… “we only have 4 vouchers, keep your hand up if you’re still interested in giving up your seat for US$1,500… US$1,200… US$800…” until only 4 hands were left.

This improves on the United system in all the key ways:

- It’s emotionally framed as a game rather than a chore,

- socially, it sends a strong signal that the reward is in high demand, i.e. no one is left feeling like they were a sucker for taking the deal, and

- functionally, it ensures the participants’ value (i.e. the voucher they get) is the maximum amount rather than leaving people guessing whether they accepted too early [4].
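The step-down announcement described above behaves like a descending-price offer, and a short Python sketch can make the mechanics concrete. Everything in it is an assumption for illustration: the function name `run_descending_offer`, the passenger labels, and the idea that each passenger has a private minimum voucher value they would accept are hypothetical, not anything an airline has published.

```python
# Hypothetical data: the lowest voucher value (US$) each passenger would accept.
MIN_ACCEPTABLE = {
    "A": 300, "B": 700, "C": 900, "D": 1100,
    "E": 1400, "F": 1900, "G": 2500,
}


def run_descending_offer(min_acceptable, seats_needed, offers):
    """Announce offers from highest to lowest until only `seats_needed` hands remain.

    Returns (chosen_passengers, voucher_value), or ([], None) when even the
    highest offer attracts too few volunteers.
    """
    hands_up = list(min_acceptable)
    price = None
    for offer in sorted(offers, reverse=True):
        # A hand stays up only while the offer still meets that passenger's minimum.
        remaining = [p for p in hands_up if min_acceptable[p] <= offer]
        if len(remaining) < seats_needed:
            break  # going lower would leave too few volunteers; keep the last price
        hands_up, price = remaining, offer
        if len(hands_up) == seats_needed:
            break  # exactly enough hands left, so stop lowering the offer
    if price is None:
        return [], None
    # If more hands than seats remain at the final price, this sketch picks
    # those with the lowest minimums (an arbitrary tie-break).
    chosen = sorted(hands_up, key=lambda p: (min_acceptable[p], p))[:seats_needed]
    return chosen, price


print(run_descending_offer(MIN_ACCEPTABLE, 4, [2000, 1500, 1200, 800]))
# prints (['A', 'B', 'C', 'D'], 1200)
```

In this made-up example the offer stops at US$1,200, the highest value at which exactly four hands remain, so nobody in the group is left wondering whether they accepted too early.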

Even a flawless incentive design might only have avoided the worst of the Flight 3411 Incident; it is by no means fool-proof. As long as airlines operate in a system that is inherently biased towards the business metric of “airline seat utilization” at all costs, some version of this story will continue to repeat. Therefore, it’s necessary to consider additional measures.

2. Establish Counter-Metric Guardrails

Since business outcomes such as revenue and profitability are much easier to measure than the holistic health and well-being of your customer, it is almost natural that a typical collection of metrics leans heavily towards the health of the business rather than the health of your customer. This is especially true for public companies that are evaluated based on “maximizing shareholder value.”

In order to counteract this tendency, we need to find clever ways to create a collection of counter-metrics. For every business health metric that is established, there should be a counter-metric established to a) recognize the metric’s potential positive AND negative impact to customers and b) balance out its effects.

This idea of a counter-metric is not new. The concept has likely existed for a long time, but became a part of the product management lexicon through mentions by author and Facebook Product Design VP Julie Zhuo, who has used the term “counter-metric” since 2016, with some usage in other writings (note: almost everyone I know who speaks of “counter-metrics” has worked at Facebook at some point).

A simplified process to develop a counter-metric:

- Recognize your product exists to create customer value AS WELL AS business value. I covered this in Part 2 as the first step of creating a metric.

- Anticipate trade-offs a single metric may create. An example of how to do this is to imagine, in the extreme scenario, what would happen if only the single metric is achieved.

- Balance out the trade-off by introducing another metric that measures the other side of the story. An example of a common trade-off in user acquisition marketing: quantity often comes at a cost of quality, so ensuring a counter-metric exists to measure quality (conversion rate/churn) will help balance the incentives to maximize a quantity metric (new users per day) [5].
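As a rough illustration of the last step, the user acquisition example can be sketched in Python: a gain in the quantity metric (new users per day) only counts as healthy if the quality counter-metric (conversion rate) has not fallen too far. The function names, the sample numbers, and the 10% tolerance are all hypothetical choices for this sketch, not a standard.

```python
def relative_change(before, after):
    """Fractional change from `before` to `after` (0.25 means +25%)."""
    return (after - before) / before


def review_growth(new_users_before, new_users_after,
                  conversion_before, conversion_after,
                  max_quality_drop=0.10):
    """Judge a quantity gain against its quality counter-metric.

    `max_quality_drop` is an assumed tolerance: the largest relative fall in
    conversion rate the team is willing to trade for more new users.
    """
    quantity = relative_change(new_users_before, new_users_after)
    quality = relative_change(conversion_before, conversion_after)
    if quantity <= 0:
        return "no growth to review"
    if quality < -max_quality_drop:
        return "guardrail breached"
    return "healthy growth"


# New users per day jump 50%, but conversion falls from 20% to 12%.
print(review_growth(1000, 1500, 0.20, 0.12))  # prints guardrail breached

# A smaller gain that leaves conversion almost untouched.
print(review_growth(1000, 1200, 0.20, 0.19))  # prints healthy growth
```

The point of the pairing is that neither number is reported alone: the dashboard verdict depends on both sides of the trade-off.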

The usage of counter-metrics in your product is a fundamental improvement over the “band-aid” incentive approach because it builds checks and balances into the definition of success for your product. Because so much of the product development process is driven by metrics (quarterly goals, OKRs, etc.), baking counter-metrics into your dashboard can create accountability in an explicit and transparent way. This provides a robust foundation for product managers, system designers, and decision-makers to optimize and advocate for competing metrics while maintaining a healthy balance.

Case In Point… Facebook-Cambridge Analytica

While we don’t have visibility into how Facebook actually uses metrics and counter-metrics, we can assume the key business driver is revenue growth (easy to measure), which should be balanced with a counter-metric for privacy and customer data security like a “privacy score” (hard to measure). Such a score would uphold the integrity of privacy and prevent future “Cambridge Analytica” incidents [6][7]. A privacy score may look something like this at various levels:

- App-Level/Developer-Level Counter-Metric —an app- or developer-level privacy score can measure and show the degree of possible private data exposure when a user approves a 3rd-party app or developer. With this, a) users should see the score when they install a 3rd-party app so they can decide whether they want to share data, b) Trust & Safety moderators could flag apps or developers that have abnormally low scores, and c) developers would be incentivized to keep a good privacy score by only asking for the data permissions they need.

- User-Level Counter-Metric — a user-level privacy score can measure the total privacy exposure for a given user, aggregated across all installed apps. Paired with a privacy dashboard feature, users can see how their exposure compares to other average users as well as which apps have the most impact.

- Platform-Level Counter-Metric — a platform-level counter-metric can measure the total privacy exposure on a platform level, aggregated across all users and apps.
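To make the three levels a little more tangible, here is a minimal Python sketch of how such a score could roll up from apps to users to the platform. It is purely hypothetical: the permission names, the sensitivity weights, the 0 to 100 scale, and the flagging threshold are all invented for illustration and say nothing about how Facebook actually measures privacy.

```python
# Hypothetical sensitivity weight per data permission (higher = more private).
SENSITIVITY = {"public_profile": 1, "email": 2, "friends_list": 4, "private_messages": 8}
MAX_EXPOSURE = sum(SENSITIVITY.values())  # 15: an app asking for everything

# Hypothetical apps and the permissions each one requests.
APPS = {
    "weather": ["public_profile"],
    "news": ["public_profile", "email"],
    "quiz": ["public_profile", "email", "friends_list", "private_messages"],
}

# Hypothetical users and the apps each one has installed.
USERS = {"ana": ["weather", "news"], "ben": ["quiz", "weather"]}


def app_exposure(permissions):
    """App level: total sensitivity of the data an app asks for."""
    return sum(SENSITIVITY[p] for p in permissions)


def app_privacy_score(permissions):
    """App level: 100 means minimal data requested, 0 means everything."""
    return 100 - (100 * app_exposure(permissions)) // MAX_EXPOSURE


def user_exposure(installed_apps):
    """User level: exposure aggregated across all of a user's installed apps."""
    return sum(app_exposure(APPS[name]) for name in installed_apps)


def platform_exposure(users):
    """Platform level: exposure aggregated across all users and apps."""
    return sum(user_exposure(installed) for installed in users.values())


def flag_low_scores(apps, threshold=50):
    """Apps a Trust & Safety reviewer might look at first (assumed threshold)."""
    return sorted(name for name, perms in apps.items()
                  if app_privacy_score(perms) < threshold)


print(flag_low_scores(APPS))     # prints ['quiz']
print(user_exposure(USERS["ben"]))  # prints 16
print(platform_exposure(USERS))  # prints 20
```

Even in this toy version, an app that asks only for what it needs keeps a high score, which is the incentive described for developers above.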

CEO of Cambridge Analytica speaking at a conference in 2016. Photo from Engadget.

Nothing is fool-proof — as people who mean to do harm will always find a way — but counter-metrics as a guardrail could arguably have flagged suspicious activities by Cambridge Analytica and enabled Facebook to move more quickly to ban them before it was too late. More generally, a counter-metric would make it harder for bad actors to thrive as well as limit the time and scope of negative consequences.

3. Empower A Culture Of Accountability

Culture is one of the most powerful, intangible forces in a community. As defined, culture is a “set of shared attitudes, values, goals, and practices that characterize an institution or organization.” In this sense, a culture of accountability equates to an established set of norms around what’s right and an expectation that someone will call out bad behavior when they observe it. See something, say something.

Culture is incredibly powerful [8]. If you can build a culture of doing the right thing and provide mechanisms within your ecosystem to promote that culture, you have a built-in safeguard.

Culture starts with leadership articulating values (establish “what we believe is right and well”), modeling behavior, and sharing and repeating the message with consistency. Subsequently, ensuring that everyone within the community can hold stewardship over the culture and is empowered to act on behalf of the shared values creates robust accountability (e.g. the power to act in the interest of the customer by giving refunds without further approval or report bad behavior in a safe and secure manner without fear of repercussions). If the bad behavior is observable, it should be called out and publicly addressed as soon as possible [9][10].

Changing culture is hard. It’s difficult to succinctly articulate culture, and even more difficult for people to adopt a new set of norms. And while culture takes a long time to establish, it only takes an instant to destroy. Bad behavior seen at the leadership level is utterly demoralizing; few things are as deflating and demotivating as seeing respected leaders knowingly take a nefarious position or turn a blind eye [11].

Absent a culture of accountability, bad behavior can go unnoticed and unchallenged. Given the pressure of business objectives and “success at all costs,” bad behavior may even be encouraged.

Case In Point… Wells Fargo Fake Accounts

The opening of fake accounts was a symptom of the aggressive sales culture enabled by the highest level of the organization. If revenue KPIs keep going up, anything goes.

Photo from CNN.com.

When wrongdoing is pervasive, you begin to feel like it’s okay. You may even think it might be bad if you don’t participate. Social pressure, FOMO, etc. are very real. The problem is exacerbated when metrics are tied to individual performance. In the Wells Fargo case, not only were employees given impossible quotas on the number of accounts they needed opened (stick), they were compensated monetarily for their efforts (carrot).

While Wells Fargo has taken steps to “transform” for the better, the aggressive sales culture reportedly remains. Unfortunately, getting rid of 5,300 people doesn’t change the core culture. Per the New York Times article: “sales incentives have changed, not disappeared.” Even more damning, the article reports “there is a general fear of retaliation for speaking out,” a clear sign the culture has more room for improvement.

The Interplay Between Incentives, Counter-Metrics, And Culture

As may already be apparent, the three levers of incentives, counter-metrics, and culture are not mutually exclusive. In fact, applying all three is likely required to guard customer well-being against unintended consequences, with each lever advancing customer interests in a different way.

Direct incentives establish the norm of what “good” behavior looks like.

Counter-metrics help consistently measure and align high-level business and customer objectives.

A culture of accountability reinforces incentives and metrics.

For Wells Fargo to change their culture, it would require a holistic re-engineering of incentive structures that decouples individual compensation from business metrics by going commission-free (removal of perverse incentives), a change of guard throughout the leadership as well as the implementation of a whistle-blowing mechanism (top-down culture commitment), and a refocus on customer financial well-being (counter-metric).

For United, instead of the crew being praised for their ability to maximize profitability, the company would need to strengthen their values around customer service (culture), surface and regularly refer to 3rd-party rankings of customer satisfaction (counter-metric), and find ways to reward employees who go above and beyond (direct incentives).

Facebook seems to be on its way to establishing a culture of privacy at the leadership level with its latest initiatives, continuing robust and consistent use of counter-metrics, and rethinking how its partners and users can access data.

Mark Zuckerberg clearly, openly, and publicly establishing a new cultural norm at Facebook during its annual F8 Conference, 2019. Photo from Business Insider.

As a product manager and product leader, you wield immense power in setting the metrics, and therefore the goals of your team’s success.

I recently had the privilege of inviting Mary Berk, Product Manager at Facebook / tech industry veteran / PhD in Ethics, to guest lecture in my MBA course. One of the most pertinent takeaways was this:

Design is an exercise in power. Use it well… When you put a product out there, you are co-creating the world… Take the challenge to think beyond your immediate product goals, to the world you’re a part of and shaping. When you put a product out there, you are co-creating the world.

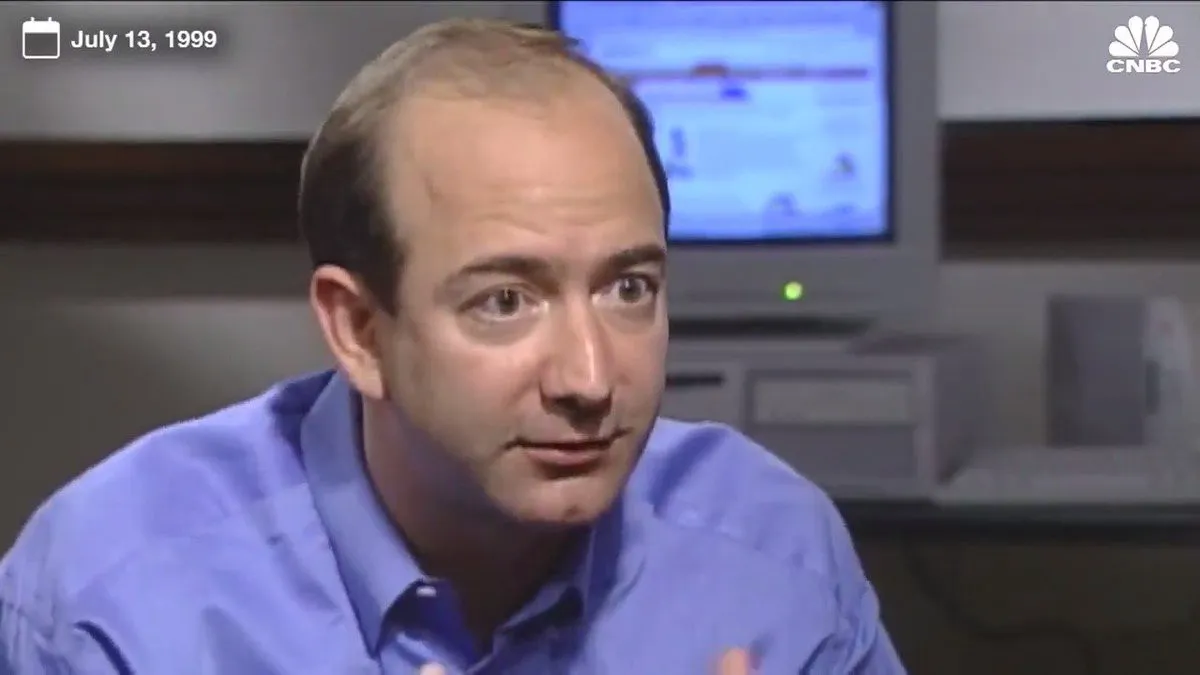

As a product manager it’s important to recognize how products impact customers. Businesses can’t exist simply to maximize shareholder value, as this emphasis can lead to detrimental customer impact. As Jeff Bezos articulated as far back as 1999: “…what matters to me is we provide the best customer experience… in the long term, there is never any misalignment between customer interest and shareholder interest.”

Photo from CNBC.com.

Per the law of unintended consequences, you will never be perfect in anticipating all the possible outcomes, but using the options above to improve the culture, broaden the KPIs, and design better incentives is a good way to start.

NOTES

[1] I personally experienced this a few years back when fitness trackers were the #1 holiday stocking-stuffer. The simple quantification of steps per day had me circling the block on my way home from work to hit the arbitrary, preset health goal of 10,000 steps, even though I had no idea what the benefits of 10,000 steps might be, or whether there was any meaningful difference between my 9,900 steps and the 10,000 goal.

[2] A well-documented case of a well-intended but detrimental metric in education policy is the standardized testing and test score system that drives the American education system. Over the course of many years, education in the US has increasingly relied on standardized test scores to manage everything from school funding to admissions to teachers’ salaries to policy-making.

[3] Back in my days PM-ing mobile games, the entire cross-functional team was always hyper-sensitive to the systems of measurements and incentives we created for our players. To that end, we maintained a key mindset: If there is an opportunity for a player to gain an unfair advantage, there is a 100% chance someone will leverage that loophole and cheat (and likely be proud of it too). It may sound paranoid, but it’s the only way to ensure the game is fair and no single player can “game the system”.

[4] To really up the social (or emotional) incentive, the airline could have also re-framed the voucher reward with altruism: “We have got 4 crew members here that need to get to Louisville so a plane full of passengers there aren’t left stranded. Can any one of you help them out and get a voucher for your troubles?”

[5] A couple more examples. In freemium monetization: ensuring a depth metric (average revenue per user) is balanced with a breadth metric (% payer). In eCommerce optimization: ensuring an efficiency metric (average # of steps to purchase completion) is balanced by an effectiveness metric (cart conversion/abandonment). The keen analytical eye will note all these examples balance out absolute/volume measurements with relative/ratio measurements.

[6] At the point in time when Cambridge Analytica was extracting private user data through various mechanisms, Facebook’s developers’ terms of service (ToS) likely did not have enough accountability. This is an example where rule-based disincentives can be ineffective without measurement + enforcement. Apple, on the other hand, rules its platform with a consistent iron fist, rigorously reviewing each App Store submission, rejecting apps that violate their terms, and regularly pulling previously-approved apps from the App Store if issues are discovered.

[7] Another social media example would be to develop a counter-metric to user engagement that balances against the promotion of polarizing content. Such content has been shown to drive stickiness but has created toxic environments on social media platforms and beyond. Facebook talked about “time well spent” last year and Twitter is making an effort to develop a “conversational health” measurement as their key counter-metric. This can be combined with behavior-influencing incentive features and systems similar to those found in MMO-RPGs to improve overall user engagement wellness.

[8] Nothing keeps a person in line better than knowing they are being watched. 24-hour surveillance a la China and the correlated drop in crime rates is a particularly dystopian example.

[9] When I worked at Ford Motors as an engineering intern years ago, one of the cardinal sins of the factory was: “Never do anything to stop the assembly line.” Around that time, Toyota’s famed quality popularized some of their manufacturing practices, which included empowering any and all factory employees to hit the emergency stop button if they observed a quality issue on the assembly line. This level of empowerment was (and probably still is) unfathomable in US factories.

[10] “Justice delayed is justice denied.”

[11] Culture attracts like-minded people, for better or worse. Since people hire people like themselves, once the wrong culture is established, you and your team hire more of the same, making it even harder to improve culture. This is the ultimate culture downward spiral.